You’re Buying AI. Who’s Managing It?

Most small and mid-sized businesses are buying AI tools, but only 25% are using AI daily as part of regular operations — and just 15% have fully integrated it into how they run their business. The gap isn’t about technology. It’s about management.

I’ve watched businesses buy technology for 25 years. The pattern repeats: a new category emerges, vendors push urgency, companies buy in, and almost nobody checks whether it actually works. Two years later, the budget is bigger, and the problems are still there.

The Ruby on Rails Problem

In 2015, a lead developer convinced a client to switch their online clothing store from PHP to Ruby on Rails. The client had no Ruby developer network, so freelance help was expensive and led to more problems. Three years later, the lead developer quit, the code was neglected, and they returned to PHP.

AI fits the same cycle. Developers get excited about new technology. That’s not a criticism; it’s just how developers work. But excitement about the tool is not the same as fit for the business. Ruby, Mesos, CoffeeScript: a graveyard of technologies that made perfect sense at the time.

The difference with AI is reach. Unlike a new language or database, AI adoption changes workflows, staffing, and collaboration, so the disruption runs deeper than a language migration.

AI Adoption Won’t Manage Itself

I recently shifted rackAID from a managed service provider to a consultancy, relying on AI and bringing in freelancers. The freelancers’ results were slow and didn’t fit, so I let them go.

Frustrated after several rounds of revisions, I used AI myself. Three hours later, I was done.

I’m a one-person shop. If you’re running a team of 50 or 200, the stakes are a lot higher.

Companies with a structured approach to AI adoption are three times as likely to actually use their tools. One study found that companies with a real plan scored 85% on AI readiness frameworks. Companies just stacking AI subscriptions scored 32%. That gap doesn’t close on its own.

The reason is simple. Nobody is asking the right questions:

- Do your employees know how to get results from the AI tools you’re paying for?

- What workflows will benefit most from AI?

- Does your team know how to catch AI errors before they become problems?

In my own AI adoption, I identified clear bottlenecks in my workflow. I built an agentic workflow to help me write this content. I built a dashboard to surface data and metrics from a half dozen legacy systems. I set up an AI agent system so that when new opportunities arise, I can easily automate them.

I’ve talked with managers at mid-sized companies — a coffee roaster, a hospital cafeteria, a pet food company. Most are still using ChatGPT in the browser. No plan. Nobody knows when AI makes sense and when it doesn’t. That’s not an AI problem. That’s a management problem.

Oversight isn’t complicated. It just means someone is actually paying attention.

The Pilot Trap

Starting with a focused pilot in one department typically delivers three to five times faster ROI than a company-wide rollout. That finding holds up across multiple studies and matches what I’ve seen. The reason is straightforward: pilots have a clear goal, someone tracking results, and a tight timeline. In other words, they have an AI manager.

The trap comes after the pilot.

The pilot works, everyone gets excited, and then the rollout skips the discipline that made the pilot succeed. More budget, less oversight, a fuzzy plan to scale up.

I saw a firm nail a pilot to automate their intake process. Processing time was cut in half. The team was happy. Six months later, they’d spent a lot more rolling AI into four more departments. Nobody was measuring results. Two of those projects were actually making things worse. It took an outside review to catch it.

The real question isn’t whether to run a pilot. It’s who is watching when the pilot ends. Is anyone still measuring when the excitement is gone?

Guessing ≠ Results

I’ve seen a few patterns in companies that actually get results.

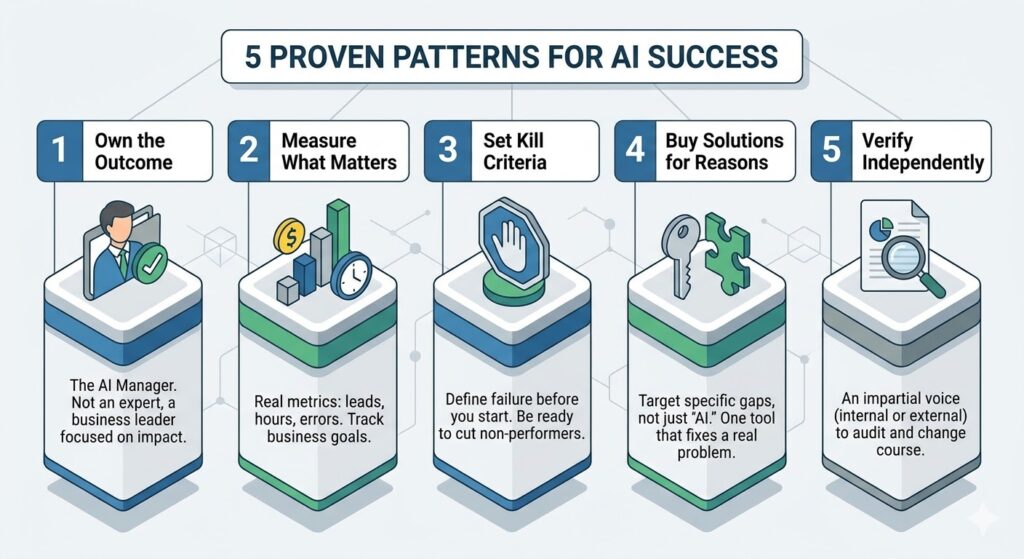

Someone has to own the outcome. That’s your AI manager. Not the person who picked the tool or signed the contract. Someone whose job is to measure whether the investment is producing real business value and fix it if it isn’t.

An effective AI manager isn’t an AI expert.

They’re someone who knows enough about AI to spot vendor hype, and enough about your business to know where it can actually make an impact. They have to think independently and be free to push for or against AI integration.

Measure something real. “We use AI” isn’t a metric. Leads generated, hours saved, errors reduced — those are metrics. Track them. If you can’t say what improved and by how much, you’re guessing.

To pick the right metrics, start by identifying what business goal you want to impact. If your priority is faster response times, measure average handling time. If your goal is more qualified leads, track conversion rates. Choose a few metrics that reflect real improvements, make them simple to track, and revisit them as you learn.
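Here is a minimal sketch of what "what improved and by how much" can look like in practice. It assumes you already collect a metric (average handling time in this case) for a baseline period and for a period after the tool went live. The function name and the numbers are hypothetical, made up for illustration.

```python
from statistics import mean


def percent_change(before: list[float], after: list[float]) -> float:
    """Percent change in the average of a metric from the baseline period to the current one."""
    baseline = mean(before)
    return (mean(after) - baseline) / baseline * 100


# Hypothetical weekly average handling times, in minutes.
handling_before = [12.0, 11.0, 13.0, 12.0]  # four weeks before the AI tool
handling_after = [9.0, 10.0, 8.0, 9.0]      # four weeks after the AI tool

change = percent_change(handling_before, handling_after)
print(f"Average handling time changed by {change:.1f}%")  # prints -25.0%
```

A spreadsheet does the same job. The point is that the comparison has a baseline, a current value, and a number you can say out loud.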

Set kill criteria before you start. If the tool doesn’t hit the goal, you cut it. Say you add AI to your support queue and satisfaction drops 10%. If that’s your kill criterion, you know to stop. Without it, you’ll keep paying because canceling feels like admitting a mistake.
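The support-queue example can be written down as a rule you agree on before launch. This is a rough sketch, not a prescribed method: it assumes "drops 10%" means a 10% drop relative to the pre-AI baseline, and the class name, field names, and satisfaction scores are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class KillCriterion:
    """A threshold agreed on before rollout (hypothetical structure)."""
    metric: str
    baseline: float           # value measured before the AI tool was added
    max_relative_drop: float  # 0.10 means "cut the tool if the metric falls 10%"

    def relative_change(self, current: float) -> float:
        """Change relative to the baseline; negative means the metric got worse."""
        return (current - self.baseline) / self.baseline

    def should_kill(self, current: float) -> bool:
        """True when the metric has fallen by at least the agreed amount."""
        return self.relative_change(current) <= -self.max_relative_drop


# Hypothetical numbers: satisfaction was 80 before the tool went live.
criterion = KillCriterion(metric="support satisfaction", baseline=80.0, max_relative_drop=0.10)

for score in (78.0, 70.0):
    change = criterion.relative_change(score)
    verdict = "cut it" if criterion.should_kill(score) else "keep going"
    print(f"{criterion.metric} at {score}: {change:.1%} vs baseline, {verdict}")
```

Writing the threshold down ahead of time is the whole trick. When the number comes in, the decision has already been made.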

Buy solutions for a reason. One tool that solves a real problem beats five that just sound impressive. Most AI goes underused because companies buy “AI” instead of something that actually fixes a gap. If you know your sales leads aren’t qualified, buy a tool built to qualify leads. Don’t buy an AI-enabled CRM. The tech is too new. You’ll have the greatest impact with tools built for your specific challenge.

The companies getting the most from AI have someone independent checking whether the tools are working. Sometimes it’s an outside person. Sometimes it’s someone in-house who isn’t afraid to change course. That independence matters.

The Wrong Kind of Urgency

If you figure out AI before your competitors, you get an edge. That’s been true in every tech shift I’ve seen. BCG found that 74% of companies struggle to get real value from AI. The ones that do get value are pulling ahead.

Buying AI tools isn’t the same as figuring out AI. If you rush to buy without someone managing adoption, or skip setting kill criteria, you’re not adopting AI. You’re just spending faster.

The pressure isn’t to adopt AI. It’s to solve real problems that AI can handle right now. When you focus there, the right tools become obvious. The only question that matters: is this working?

Before You Buy Another Tool, Ask These

Whether you’re evaluating a new tool or already using one, ask:

- Who is responsible for measuring whether this is working?

- What specifically will improve, and how will you know?

- What would make you cancel this in 90 days?

- Are you buying a solution to a specific problem, or buying an “AI” platform?

- If a vendor or partner manages these tools, how will they determine whether the tools meet your business needs?

- Is your data clean and unified enough to support what you’re trying to do?

The companies pulling ahead aren’t doing anything secret. They’re asking these questions and insisting on real answers.

Jeff Huckaby · Founder, RackAID

25 years in technology. Quoted in Forbes, Inc., and Entrepreneur. I help businesses cut through the AI noise and figure out what actually makes sense.